Ocean-1, a compact 7B Ocean model, now outperforms GPT-4 in retrieval-augmented generation (RAG) at up to 100x lower cost. Several months ago, Ocean-1 debuted as the first foundation model built specifically for contact centers, offering strong out-of-the-box capabilities, reliable instruction following, high cost-effectiveness, and low latency. We achieved this by applying careful domain-specific fine-tuning to strong base large language models (LLMs). In effect, the model first masters general English language tasks and then specializes in becoming an exceptional sales or customer service agent.

Since Ocean-1's debut, open-source base LLMs such as Mistral 7B, Phi-2, and Yi-34B have advanced significantly. Most notably, the recently released Mixtral 8x7B, which uses a mixture-of-experts (MoE) architecture, outperforms GPT-3.5 across various tasks.

In this post, we describe how we combined these advances with high-quality domain-specific data to fine-tune the Mixtral model. The result is a model that surpasses GPT-4 in retrieval-augmented generation.

Retrieval-Augmented Generation (RAG)

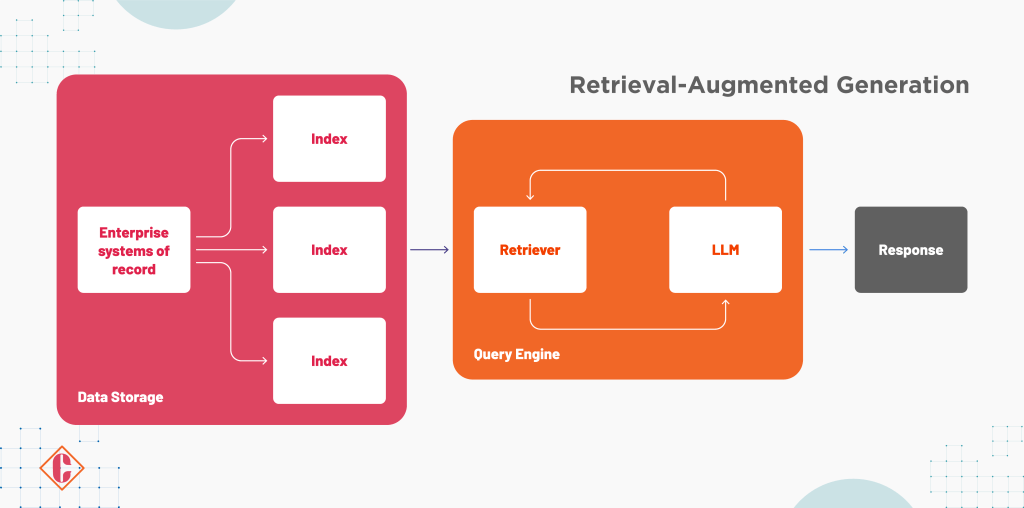

Cresta's knowledge assist feature uses retrieval-augmented generation (RAG) to generate responses during live, human-to-human conversations. Working in real time, it listens to the conversation, identifies moments that call for knowledge assistance, automatically searches the knowledge base, and uses a large language model (LLM) to generate a precise response from the retrieved content.
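The four steps above (detect a knowledge moment, search the knowledge base, build a prompt, generate) can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not Cresta's implementation: the keyword-cue detector and word-overlap retriever are hypothetical stand-ins for trained models and a real search index, and `llm` is any hypothetical callable that maps a prompt to text.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical cue phrases standing in for a trained "knowledge moment" detector.
QUESTION_CUES = ("how do i", "how can i", "what is", "can i", "why is", "?")


@dataclass
class Document:
    title: str
    text: str


def tokenize(text: str) -> set:
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def detect_knowledge_moment(utterance: str) -> Optional[str]:
    """Return a search query if the utterance looks like a knowledge request."""
    lowered = utterance.lower()
    if any(cue in lowered for cue in QUESTION_CUES):
        return utterance.strip()
    return None


def retrieve(query: str, kb: List[Document], k: int = 2) -> List[Document]:
    """Rank documents by word overlap with the query (a toy stand-in for real search)."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc.text)), doc) for doc in kb]
    hits = [pair for pair in scored if pair[0] > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in hits[:k]]


def build_prompt(query: str, docs: List[Document]) -> str:
    """Place the retrieved documents in the prompt so the LLM answers from them."""
    context = "\n\n".join(f"[{doc.title}]\n{doc.text}" for doc in docs)
    return (
        "Answer the customer's question using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


def knowledge_assist(
    utterance: str, kb: List[Document], llm: Callable[[str], str]
) -> Optional[str]:
    """Detect -> retrieve -> generate; returns None when no suggestion is needed."""
    query = detect_knowledge_moment(utterance)
    if query is None:
        return None
    docs = retrieve(query, kb)
    if not docs:
        return None
    return llm(build_prompt(query, docs))


if __name__ == "__main__":
    kb = [
        Document("Password reset", "To reset your password, open Settings and choose Reset password."),
        Document("Billing cycle", "Invoices are issued on the first day of each month."),
    ]
    echo_llm = lambda prompt: prompt  # hypothetical stand-in for a real LLM call
    print(knowledge_assist("How do I reset my password?", kb, echo_llm))
```

Passing an "echo" LLM that returns its prompt makes it easy to inspect exactly which retrieved context the model would see. Utterances that are not knowledge requests short-circuit before any LLM call, which is where most of the cost and latency savings in a real pipeline would come from.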

The Power of RAG

RAG underpins Cresta's operations by supplying the LLM with precisely the context it needs to answer. Using powerful LLMs such as GPT-3.5 or GPT-4 at every stage of the inference pipeline is promising, but it poses two significant challenges:

- Cost Considerations: At $0.03 per 1K prompt tokens, calling GPT-4 at multiple stages of the pipeline quickly becomes expensive, and those costs add up for customers operating at scale.

- Latency Sensitivity: Real-time applications demand low latency. Lower latency correlates directly with user adoption, so knowledge suggestions must arrive quickly.
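To make the cost point concrete, the prompt-token bill is simple arithmetic: conversations × LLM calls per conversation × prompt tokens per call ÷ 1,000 × price. The sketch below uses the $0.03 per 1K prompt tokens quoted above; the workload numbers are hypothetical, and completion tokens (billed separately) are ignored.

```python
GPT4_PRICE_PER_1K_PROMPT_TOKENS = 0.03  # USD, the figure quoted in the text


def prompt_cost_usd(
    conversations: int,
    llm_calls_per_conversation: int,
    prompt_tokens_per_call: int,
    price_per_1k: float = GPT4_PRICE_PER_1K_PROMPT_TOKENS,
) -> float:
    """Prompt-token cost only; completion tokens are billed separately and ignored here."""
    total_tokens = conversations * llm_calls_per_conversation * prompt_tokens_per_call
    return total_tokens / 1000 * price_per_1k


if __name__ == "__main__":
    # Hypothetical workload: 1M conversations/month, 5 LLM calls each, 2,000-token prompts.
    gpt4 = prompt_cost_usd(1_000_000, 5, 2_000)
    print(f"GPT-4 prompt cost per month: ${gpt4:,.0f}")
    print(f"At 100x lower cost:          ${gpt4 / 100:,.0f}")
```

Under these made-up volumes, each conversation consumes 10,000 prompt tokens ($0.30), which adds up to $300,000 per month in prompt tokens alone, versus $3,000 at 100x lower cost.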

Custom Ocean Models for RAG

Recognizing that generic LLMs might not suffice, Cresta built custom Ocean models for RAG. Ocean model training combines synthetic data with customer-specific data to optimize performance.

- Synthetic Data: Cresta scrapes web content and uses GPT-4 to generate a diverse set of FAQs, which improve the LLM's ability to interpret retrieved documents in context and generate relevant responses.

- Customer-Specific Data: Query extraction models analyze customer conversations to extract queries that are then used to fine-tune the LLMs, so the models keep learning as the knowledge base evolves.
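Both data sources end up as the same kind of training record: a question, the documents retrieved for it, and a target answer. The sketch below assumes the Alpaca-style `instruction`/`input`/`output` layout, which Axolotl can consume; the source does not describe Ocean's actual record format or prompt wording, and the example questions, documents, and answers are hypothetical.

```python
import json
from typing import Dict, List

INSTRUCTION = "Answer the customer's question using only the provided documents."


def make_rag_example(question: str, documents: List[str], answer: str) -> Dict[str, str]:
    """One instruction-tuning record in the Alpaca-style instruction/input/output layout."""
    context = "\n\n".join(f"Document {i + 1}:\n{doc}" for i, doc in enumerate(documents))
    return {
        "instruction": INSTRUCTION,
        "input": f"{context}\n\nQuestion: {question}",
        "output": answer,
    }


def to_jsonl(records: List[Dict[str, str]]) -> str:
    """Serialize records as JSON Lines, one object per line."""
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)


if __name__ == "__main__":
    # Synthetic source (hypothetical): a GPT-4-generated FAQ pair over a scraped page,
    # mixed with an unrelated page so the model learns to ignore irrelevant context.
    synthetic = make_rag_example(
        "How long does a refund take?",
        ["Refunds are issued to the original payment method within 5-7 business days.",
         "Our stores open at 9 a.m. on weekdays."],
        "Refunds reach your original payment method within 5-7 business days.",
    )
    # Customer-specific source (hypothetical): a query extracted from a conversation,
    # the knowledge-base article retrieved for it, and an approved answer.
    customer = make_rag_example(
        "Can I change my plan mid-cycle?",
        ["Plan changes take effect at the start of the next billing cycle."],
        "You can request the change now; it takes effect at the start of your next billing cycle.",
    )
    print(to_jsonl([synthetic, customer]), end="")
```

Mixing in an irrelevant "distractor" document is an assumed design choice here; it encourages the model to ground its answer in the right source rather than in whatever text happens to appear in the prompt.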

Training and Evaluation

Cresta fine-tuned Mistral 7B and Mixtral 8x7B base models with LoRA (low-rank adaptation) using the Axolotl training framework, then evaluated the resulting models against human-written responses. Trained on the same dataset, the fine-tuned Mixtral 8x7B showed substantial accuracy improvements over the fine-tuned Mistral 7B and also outperformed GPT-4.
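LoRA is what makes this fine-tuning cheap: instead of updating a frozen weight matrix W0, it trains two small matrices A (r × d_in) and B (d_out × r) and computes h = W0 x + (alpha / r) · B A x. The pure-Python sketch below illustrates that textbook update rule; the source does not give Cresta's rank, alpha, target modules, or Axolotl settings, so the values used here are arbitrary.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_forward(w0: Matrix, a: Matrix, b: Matrix, x: Vector, alpha: float) -> Vector:
    """h = W0 x + (alpha / r) * B (A x).

    W0 (d_out x d_in) is the frozen base weight; only A (r x d_in) and
    B (d_out x r) are trained. B is usually initialised to zeros, so the
    adapted layer starts out identical to the base layer.
    """
    r = len(a)  # LoRA rank = number of rows in A
    base = matvec(w0, x)
    update = matvec(b, matvec(a, x))  # low-rank path: project down to r, then back up
    scale = alpha / r
    return [h + scale * u for h, u in zip(base, update)]


def lora_param_count(d_out: int, d_in: int, r: int) -> int:
    """Trainable parameters in one adapter: r * d_in (A) + d_out * r (B)."""
    return r * (d_in + d_out)


if __name__ == "__main__":
    w0 = [[1.0, 0.0], [0.0, 1.0]]
    a = [[1.0, 1.0]]  # rank r = 1
    b = [[1.0], [0.0]]
    print(lora_forward(w0, a, b, [1.0, 2.0], alpha=2.0))  # [7.0, 2.0]
    # A 4096 x 4096 projection: full fine-tuning vs. a rank-16 adapter.
    print(4096 * 4096, lora_param_count(4096, 4096, 16))  # 16777216 131072
```

For a 4096 × 4096 projection (4096 is Mistral 7B's hidden size), a rank-16 adapter trains 131,072 parameters instead of 16,777,216, under 1% of the full matrix. That small footprint is also what makes one adapter per customer practical.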

Feedback Loop for Constant Improvement

Because the Ocean models are built on LoRA, Cresta can serve a separate adapter for each customer and keep improving each one from real-time feedback. Thumbs-up and thumbs-down signals from users guide model critique and help gather more training data for continual refinement.
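One way to picture this loop is a per-customer store that routes thumbs-up suggestions into new training records and thumbs-down suggestions into a critique queue. The sketch below is a hypothetical design: the `Suggestion` fields, the retraining threshold, and the record layout are assumptions, not details from the source.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Suggestion:
    customer_id: str
    query: str
    context: str
    response: str


class FeedbackLoop:
    """Collects thumbs-up/down ratings per customer adapter (hypothetical design)."""

    def __init__(self, retrain_threshold: int = 500):
        self.retrain_threshold = retrain_threshold
        self.approved: Dict[str, List[Suggestion]] = defaultdict(list)
        self.rejected: Dict[str, List[Suggestion]] = defaultdict(list)

    def record(self, suggestion: Suggestion, thumbs_up: bool) -> None:
        """File each rated suggestion under its customer's approved or rejected list."""
        bucket = self.approved if thumbs_up else self.rejected
        bucket[suggestion.customer_id].append(suggestion)

    def critique_queue(self, customer_id: str) -> List[Suggestion]:
        """Thumbs-down suggestions, to be reviewed and corrected before reuse."""
        return list(self.rejected[customer_id])

    def training_examples(self, customer_id: str) -> List[Dict[str, str]]:
        """Thumbs-up suggestions become fine-tuning records for that customer's adapter."""
        return [
            {
                "instruction": "Answer using only the provided documents.",
                "input": f"{s.context}\n\nQuestion: {s.query}",
                "output": s.response,
            }
            for s in self.approved[customer_id]
        ]

    def ready_to_retrain(self, customer_id: str) -> bool:
        """True once enough approved examples have accumulated for this customer."""
        return len(self.approved[customer_id]) >= self.retrain_threshold


if __name__ == "__main__":
    loop = FeedbackLoop(retrain_threshold=1)
    loop.record(Suggestion("acme", "Refund time?", "Refunds take 5-7 days.", "5-7 business days."), True)
    print(loop.ready_to_retrain("acme"), loop.training_examples("acme"))
```

Keeping the data keyed by customer mirrors the one-adapter-per-customer setup: when a customer's approved examples pass the threshold, only that customer's adapter needs retraining.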

Serving at Scale with Fireworks AI

Cresta partners with Fireworks AI to serve its Mixtral- and Mistral-based Ocean models efficiently. Because many LoRA adapters run on a single base-model cluster, there is no need to deploy a separate model for each customer or use case, which cuts costs by up to 100x compared to GPT-4.
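A rough weight-memory estimate shows why sharing one base model across many adapters matters. The sketch below assumes Mistral-7B-style attention shapes (hidden size 4096, k/v projections of 1024 from grouped-query attention, 32 layers), rank-16 adapters on q/k/v/o, fp16 weights, and roughly 7B base parameters. It ignores KV cache and activations, and it does not model Fireworks AI's actual serving stack or API. A Mixtral base is larger, but the same scaling argument applies.

```python
from typing import List, Tuple

# Assumed Mistral-7B-style attention projections (d_out, d_in) for q, k, v, o:
# hidden size 4096, with k/v projecting to 1024 because of grouped-query attention.
ATTENTION_SHAPES: List[Tuple[int, int]] = [(4096, 4096), (1024, 4096), (1024, 4096), (4096, 4096)]


def adapter_params(shapes: List[Tuple[int, int]], num_layers: int, rank: int) -> int:
    """LoRA adds rank * (d_in + d_out) parameters per adapted matrix."""
    return num_layers * sum(rank * (d_out + d_in) for d_out, d_in in shapes)


def serving_memory_gb(
    num_adapters: int,
    base_params: int,
    params_per_adapter: int,
    shared_base: bool,
    bytes_per_param: int = 2,
) -> float:
    """Weight memory (fp16 by default) for serving num_adapters customers or use cases."""
    if shared_base:
        total = base_params + num_adapters * params_per_adapter  # one base, many adapters
    else:
        total = num_adapters * base_params  # one full fine-tuned model per customer
    return total * bytes_per_param / 1e9


if __name__ == "__main__":
    per_adapter = adapter_params(ATTENTION_SHAPES, num_layers=32, rank=16)
    base = 7_000_000_000  # roughly 7B parameters
    for shared in (True, False):
        print(shared, round(serving_memory_gb(100, base, per_adapter, shared), 1))
```

Under these assumptions each adapter holds about 13.6M parameters, so 100 customers need roughly 16.7 GB of weights on a shared base versus 1,400 GB for 100 separate full models.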

Final Note

Adaptive leadership has become essential for navigating complex macroeconomic conditions, prompting APAC leaders to devise strategies that strengthen employee skills and mitigate business risks. A survey of 375 business leaders across APAC markets such as Indonesia, Malaysia, and Singapore identified these top priorities:

- Developing forecasts for business performance under various scenarios (54%)

- Repurposing supply chain strategies (46%)

- Implementing operational cost reductions (45%)

Recognizing the critical role leaders play amid ongoing challenges, respondents also highlighted the traits needed to navigate economic and technological disruption: agility in decision-making (89%); collaboration, trust-building, and open engagement with employees and stakeholders (82%); and humility, meaning awareness of one's own limitations and a willingness to work with skilled people (81%).

The survey covered leaders from diverse sectors, including financial services, telecom, e-commerce, and utilities, in both technical roles such as CTOs and CIOs and non-technical roles such as CEOs and COOs. Interviews with business leaders provided supplementary insights.

FAQs

1. What are Ocean-1 and Mixtral models, and how do they differ from GPT-4?

Ocean-1 is a foundation model built specifically for contact centers: it first masters general English language tasks and then specializes in sales or customer service. Mixtral 8x7B is a recent model that uses a mixture-of-experts (MoE) architecture and outperforms GPT-3.5 across various tasks. Ocean models fine-tuned from these base models outperform GPT-4 in retrieval-augmented generation (RAG) at up to 100x lower cost.

2. What is Retrieval-Augmented Generation (RAG), and how does Cresta leverage it?

RAG is a technique that grounds an LLM's output in documents retrieved at inference time. Cresta's knowledge assist feature applies it to live human-to-human conversations: it listens to the conversation, searches the knowledge base, and uses an LLM to generate precise, well-grounded responses.

3. What leadership strategies emerged from the APAC study conducted among business leaders?

Priorities included forecasting business performance under various scenarios, repurposing supply chain strategies, and reducing operational costs. Agility in decision-making, collaboration, and humility were highlighted as crucial leadership traits for navigating economic and technological disruption.
