Do you actually know what your AI is doing?

Every enterprise CIO is trying to answer this question. And when pressed, the honest answer is usually no.

AI pilots are proliferating. Business units are deploying tools without IT’s knowledge. Agents are taking actions across live systems. And somewhere in the organization, a board member or general counsel has been quietly asking the same question.

More than 50% of organizations have only moderate or limited coverage for technology, third-party, and model risks within their AI governance programs.

This is the governance gap, and it is widening faster than most CIOs realize, because the problem is no longer limited to a chatbot answering employee questions. In 2026, AI agents are reading documents, calling APIs, updating records, routing customer interactions, and making consequential decisions autonomously. The stakes of ungoverned AI have fundamentally changed. And so has the infrastructure required to govern it.

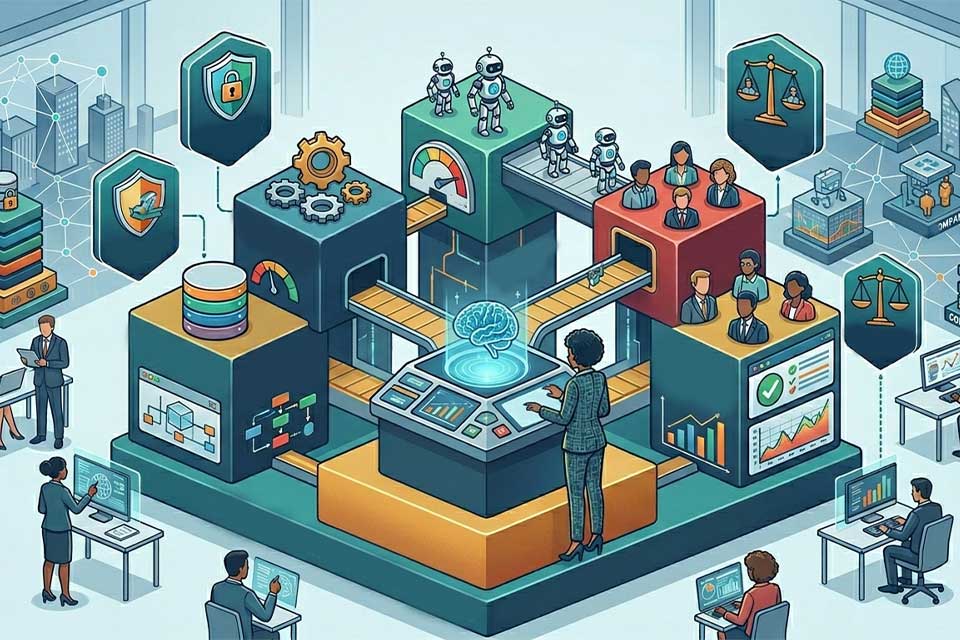

Most of these organizations have a policy document, but a policy document is not a governance stack. It is simply a statement of intent. A governance stack is operational infrastructure: the tools, controls, model registries, explainability layers, audit frameworks, and risk scoring systems that together make that intent enforceable at scale, in real time, across every AI system running in your organization.

Layer One: The AI Inventory

You cannot govern what you cannot see. The first and most foundational layer of the AI governance stack is a comprehensive, continuously updated inventory of every AI system operating inside your enterprise, including the ones your business units deployed without telling IT.

Platforms like Collibra, Holistic AI, and DataRobot AI Governance offer registry functionality as part of broader governance suites. What matters more than the specific tool is the discipline: every AI deployment, whether built internally or procured from a vendor, must enter the registry before it touches production data.
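The "registry before production" discipline can be sketched as a simple deployment gate. This is a minimal illustration, not how any of the platforms above work: the `AISystemRecord` fields, the `AIRegistry` class, and the system names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AISystemRecord:
    """One inventory entry. Field names are illustrative assumptions."""
    system_id: str
    owner: str          # accountable business owner
    source: str         # "internal" or "vendor"
    purpose: str
    registered_on: date


class AIRegistry:
    """In-memory stand-in for a real model registry (hypothetical)."""

    def __init__(self) -> None:
        self._records: dict[str, AISystemRecord] = {}

    def register(self, record: AISystemRecord) -> None:
        if record.system_id in self._records:
            raise ValueError(f"{record.system_id} is already registered")
        self._records[record.system_id] = record

    def is_registered(self, system_id: str) -> bool:
        return system_id in self._records

    def require_registered(self, system_id: str) -> None:
        """Deployment gate: refuse anything missing from the inventory."""
        if not self.is_registered(system_id):
            raise PermissionError(
                f"{system_id} is not in the AI registry; production deployment blocked"
            )


registry = AIRegistry()
registry.register(
    AISystemRecord(
        system_id="marketing-summarizer",
        owner="marketing-ops",
        source="vendor",
        purpose="internal content summaries",
        registered_on=date(2026, 1, 15),
    )
)
registry.require_registered("marketing-summarizer")  # registered, so no error
```

In practice a check like `require_registered` would sit in the deployment pipeline, so that an unregistered system fails the release rather than being discovered afterward.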

Layer Two: AI Risk Scoring and Classification

Not all AI systems carry the same risk. A content summarization tool used internally by marketing carries fundamentally different risk from an AI agent making credit decisions for loan applicants or an autonomous system routing patient care workflows. Treating every AI deployment with identical governance overhead is as counterproductive as treating none of them seriously.

The AI risk scoring layer exists to tier models by their operational risk profile, then apply proportionate governance controls to each tier.
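As a rough sketch of what tiering could look like, the snippet below classifies a system from three yes/no risk attributes and maps each tier to a set of controls. The attributes, the tier rules, and the control lists are assumptions chosen for illustration; a real framework would be defined by the risk and compliance teams.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class RiskProfile:
    """Illustrative risk attributes; a real framework would use more."""
    affects_individuals: bool   # decisions about customers, patients, applicants
    acts_autonomously: bool     # takes actions without human approval
    uses_sensitive_data: bool   # personal, financial, or health data


# Hypothetical controls per tier: higher tiers add to the lower ones.
CONTROLS = {
    Tier.LOW: ["registry entry"],
    Tier.MEDIUM: ["registry entry", "drift monitoring"],
    Tier.HIGH: [
        "registry entry",
        "drift monitoring",
        "pre-deployment review",
        "decision explanations",
    ],
}


def classify(profile: RiskProfile) -> Tier:
    """Assumed rule: impact on individuals plus any other factor is high risk."""
    if profile.affects_individuals and (
        profile.acts_autonomously or profile.uses_sensitive_data
    ):
        return Tier.HIGH
    if (
        profile.affects_individuals
        or profile.acts_autonomously
        or profile.uses_sensitive_data
    ):
        return Tier.MEDIUM
    return Tier.LOW


summarizer = RiskProfile(False, False, False)   # internal marketing summaries
credit_agent = RiskProfile(True, True, True)    # autonomous credit decisions

print(classify(summarizer).value, CONTROLS[classify(summarizer)])
print(classify(credit_agent).value, CONTROLS[classify(credit_agent)])
```

The point of the mapping is proportionality: the summarization tool picks up only the registry requirement, while the credit agent inherits every control.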

Layer Three: Explainability Infrastructure

Explainability is the capability that separates AI governance that can withstand scrutiny from AI governance that looks good on paper. It is also, consistently, the layer that enterprise organizations build last and need first.

US model risk management guidance (SR 11-7, issued by the Federal Reserve and adopted by the OCC as Bulletin 2011-12) and the EU AI Act’s transparency and explanation provisions for high-risk systems (Articles 13 and 86) both push financial institutions and high-risk AI operators to demonstrate that AI-driven decisions can be explained to regulators and customers. “The algorithm decided” is not an explanation. For a credit denial, a fraud flag, or a clinical recommendation, a regulator or an appeals process needs to trace the decision back to the data inputs that drove it, the model logic that weighted them, and the output threshold that triggered the action.
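To make the three parts of that trace concrete (inputs, weighting logic, threshold), here is a minimal sketch built on a deliberately simple linear scoring model. The feature names, weights, bias, and threshold are invented for illustration; production models are rarely this simple, and a nonlinear model would need a dedicated attribution method in place of the weight-times-input calculation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionTrace:
    """Everything needed to reconstruct one decision after the fact."""
    inputs: dict[str, float]
    contributions: dict[str, float]  # weight * input, per feature
    score: float
    threshold: float
    outcome: str


def score_with_trace(
    inputs: dict[str, float],
    weights: dict[str, float],
    bias: float,
    threshold: float,
) -> DecisionTrace:
    """Score a linear model and keep the per-feature breakdown."""
    contributions = {name: weights[name] * value for name, value in inputs.items()}
    score = bias + sum(contributions.values())
    outcome = "approve" if score >= threshold else "deny"
    return DecisionTrace(dict(inputs), contributions, score, threshold, outcome)


def top_drivers(trace: DecisionTrace, n: int = 2) -> list[str]:
    """Features ranked by absolute contribution to the score."""
    ranked = sorted(
        trace.contributions,
        key=lambda name: abs(trace.contributions[name]),
        reverse=True,
    )
    return ranked[:n]


# Hypothetical credit model and applicant.
weights = {"debt_to_income": -2.0, "years_employed": 0.5, "missed_payments": -1.0}
applicant = {"debt_to_income": 0.5, "years_employed": 4.0, "missed_payments": 3.0}

trace = score_with_trace(applicant, weights, bias=1.0, threshold=0.5)
print(trace.outcome)        # deny
print(trace.score)          # -1.0
print(top_drivers(trace))   # ['missed_payments', 'years_employed']
```

Persisting a record like `DecisionTrace` alongside each decision is what lets an appeals process answer "why" later, rather than re-running a model that may have changed since.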

Layer Four: Continuous Model Monitoring and Drift Detection

Model decay is often discussed as a theoretical risk, but it is an operational certainty. A fraud detection model trained on 2023 transaction patterns may misclassify 2026 fraud patterns that didn’t exist in its training data. A credit scoring model calibrated to a pre-inflationary economy may systematically mis-score borrowers in a changed rate environment. Model drift occurs when a model’s behavior diverges from its intended performance because the real-world distribution it operates in has changed. It can fundamentally alter a model’s risk profile almost overnight, without any change being made to the model itself.

The monitoring layer of the AI governance stack must track four dimensions in real time: performance metrics (accuracy, precision, and recall relative to baseline), input distribution shifts (changes in input data statistics that may signal data drift), output distribution shifts (changes in decision patterns that may signal a change in model behavior), and fairness metrics across demographic subgroups (ensuring that drift doesn’t disproportionately degrade performance for specific populations).
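One common way to quantify a distribution shift for a single input feature is the population stability index (PSI), which compares the share of values falling into each bin at training time with the share seen in production. The sketch below is one possible implementation using only the standard library. The bin edges and sample values are made up, and the alert threshold of 0.25 is a widely used rule of thumb rather than a standard, so treat it as an assumption to tune.

```python
import math
from bisect import bisect_right
from collections import Counter


def bin_proportions(values, edges, eps=1e-6):
    """Share of values in each bin; empty bins are floored at eps to avoid log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = Counter(bisect_right(edges, v) for v in values)
    total = len(values)
    return [max(counts.get(i, 0) / total, eps) for i in range(len(edges) + 1)]


def psi(baseline, current, edges):
    """Population stability index between two samples of one feature."""
    p = bin_proportions(baseline, edges)
    q = bin_proportions(current, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff for "significant" shift

edges = [0.25, 0.5, 0.75]  # sorted bin boundaries, chosen for illustration
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]
production_scores = [0.6, 0.7, 0.8, 0.8, 0.85, 0.9, 0.9, 0.95, 1.0, 1.0]

drift = psi(training_scores, production_scores, edges)
if drift > ALERT_THRESHOLD:
    print(f"PSI {drift:.2f} exceeds {ALERT_THRESHOLD}: flag for review")
```

The same function can be applied to model outputs to cover the output-shift dimension, and run per demographic subgroup as a rough first signal for the fairness dimension.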

Layer Five: The Three Lines of Defense for AI

The operational governance model that best fits the complexity of enterprise AI in 2026 is the three lines of defense framework, adapted from financial risk management.

The first line consists of the AI product teams: the builders, deployers, and business owners. They own day-to-day quality, document model behavior, flag anomalies, and maintain the governance artifacts required by the registry.

The second line consists of risk, compliance, legal, and security teams. They define policies, review high-risk use cases before deployment, set the parameters of the risk scoring framework, and ensure that controls exist for sensitive workflows.

The third line is internal audit, which validates that governance works, that evidence exists, and that the organization can explain what happened and why to any external party that asks.

Wrapping up: The Honest Reality for CIOs

The AI governance stack is not built in a quarter. It requires a sequenced build: inventory and model registry first, because without visibility nothing else is possible. Risk scoring and classification second, because without tiering, governance resources are misallocated. Explainability infrastructure third, because regulatory deadlines are fixed. Drift monitoring fourth, because models that pass governance at launch can fail governance six months later. And the organizational governance structure continuously, because the tools only work if the humans using them have clear accountability.

The organizations that will scale AI agents safely in 2026 and 2027 are not the ones with the most sophisticated models. They are the ones with the governance infrastructure to know what their models are doing, to prove it to a regulator, and to intervene before a drifting agent causes harm they cannot explain and cannot reverse.
